Degenerative AI: Assessing the Risks and Impacts of Self-Censorship in Generative AI

Navigating the Uncharted Waters of AI

Dystopian visions of sentient AI, as often depicted in cinema, may seem far-fetched today. The risk of a subtle yet significant shift in how AI filters information, however, is very real, a lesson taught by history.

In the fast-evolving field of generative artificial intelligence, we stand at a pivotal crossroads where innovation intersects with ethics, and fascination grapples with apprehension. This landscape raises thought-provoking questions: Will AI render our current job roles obsolete in the near future? Is the burgeoning energy demand of these technologies in line with our environmental commitments? And amidst the torrent of information, how will we discern truth from artificially generated disinformation?

While the discourse around AI’s societal and economic impact is robust, the literature on its self-censorship mechanisms is sparse. Generative AI stands at the forefront of technological innovation. Its primary purpose is to produce content that not only informs and educates but also sparks imagination and expands our intellectual horizons. The potential of generative AI is vast, ranging from assisting with mundane tasks to enhancing human creativity. However, there is a less explored, more opaque aspect to this technological marvel: the risk of it degenerating from its noble purpose.

This article delves into the paradoxical nature of generative AI, exploring how, in its quest to inform and inspire, it faces the risk of degenerating into a tool of disinformation and bias. We venture into a complex maze where corporate strategies, ethical dilemmas, and the authenticity of information converge. It’s a journey from the luminous potential of generative AI to the murky depths of misinformation, where AI, driven by various pressures and incentives, might inadvertently stray from its path of enlightening users to one where it constrains, filters, and distorts the very information it is designed to proliferate. The term ‘Degenerative AI’ thus encapsulates this dual reality — the contrast between its generative capabilities and the potential degeneration into a conduit of biased or incomplete information.

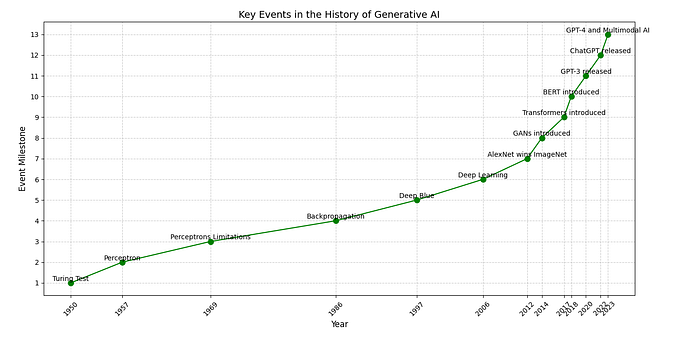

This article begins by laying a foundational understanding with a historical contextualization of censorship in the Information Age. We highlight how this practice has evolved, transitioning from traditional media into the digital realm. Following this, we delve into the global trends of self-censorship, illuminating how different incentives and pressures have shaped content across various media platforms. We then transition to examining the current landscape of content moderation, where the focus is on how AI models navigate the delicate balance between user safety, regulatory compliance, and the preservation of free expression. Finally, we assess the risks of a more opaque and potentially harmful form of self-censorship in generative AI. This section studies the potential concerns of a shift in chatbots and image-generating AI content toward directions influenced by the multifaceted terrain of stakeholder interests. Such an environment can subtly, yet significantly, influence the content and behavior of AI models, raising crucial questions about the integrity and impartiality of AI-generated information.

Censorship in the Information Age

Censorship has been an integral part of the communication landscape throughout history. From the era of international newspapers and the advent of cinema to the rise of television networks and the expansive digital realm of the Internet, the practice of tailoring and controlling content has been ubiquitous. While the form and extent of censorship vary across nations, it is a phenomenon that transcends geographical boundaries.

However, in the realm of generative AI, we encounter a more nuanced form of censorship: self-censorship. Unlike traditional censorship, where content is suppressed by external authorities, self-censorship involves an internal decision to withhold information, often motivated by strategy, fear, or deference. This form of censorship is particularly elusive and challenging to detect, as it subtly erodes the boundaries of free speech. Yet for the audience, it makes little difference whether the censorship is externally imposed or self-induced, as both ultimately constrain the free flow of information.

In the digital age, the implications of this self-censorship are profound. It calls to mind Kurt Lewin’s 1943 concept of ‘gatekeeping,’ which is increasingly relevant as AI models act as modern gatekeepers. Generative AI systems regulate the flow of information, subtly influencing public perception and decision-making processes. This becomes a significant concern when such self-censorship hinders media pluralism or restricts access to diverse knowledge, ideas, and perspectives. Consider, for example, an AI news aggregator that systematically avoids topics deemed politically sensitive or controversial. This AI, while not directly censored by any authority, could self-censor content based on programmed biases or algorithms designed to maximize user engagement and avoid controversy. Such actions, though not overtly oppressive, effectively shape public discourse by narrowing the range of topics presented to users, thus limiting their exposure to diverse viewpoints and critical issues.

As we move deeper into the era of generative AI, understanding the intricacies of AI self-censorship becomes crucial. It’s not just about what information is presented, but also what is left unsaid, and the impact of these omissions on the broader discourse.

Self-Censorship for Compliance and Access: A Mass Media Trend

In understanding the nuances of self-censorship within generative AI, it’s relevant to consider its prevalence in traditional information gatekeepers. This global trend, shaped by political, economic, and cultural contexts, mirrors potential developments in AI, revealing the complexities of ethical information dissemination amidst market pressures. While some forms of self-censorship are essential for honoring cultural sensitivities and adhering to legal standards, other manifestations can be more insidious and detrimental.

Social and Economic Pressures

Self-censorship is often driven by a complex interplay of social and economic influences. In critical areas such as public health and climate change, reports indicate that scientific publications have faced delays, alterations in wording, and even modifications of data and findings. These changes are made to appease funders or align with prevailing socio-economic narratives, highlighting the extent to which external interests — including financial incentives and societal expectations — can jeopardize the integrity of crucial information.

In the realm of news media, a multitude of factors contribute to self-censorship. Beyond subtle social influences, such as the desire to align with prevailing public sentiments or avoid controversy, the influence of ownership structures on content is significant. Take, for example, the case of Vincent Bolloré in France, whose media holdings have faced accusations of tailoring news to suit corporate and personal interests. Similarly, Rupert Murdoch’s media empire in the English-speaking world has been scrutinized for bias, demonstrating how content can be subtly crafted to align with specific agendas and thereby shape public perception. Such dynamics show the far-reaching impact of self-censorship, which stems from both economic imperatives and social pressures and affects how information is presented and perceived.

Market Demands, Cultural Sensitivities, and the One-Size-Fits-All Approach

This phenomenon of self-censorship extends beyond mere compliance with the wishes of funders and owners. The relentless push toward globalization and the pursuit of economic growth have prompted many industries to embrace a one-size-fits-all approach. This strategy, while economically efficient, compromises originality, impartiality, and socially daring themes.

Consider Hollywood’s approach, which often involves sanitizing content and themes for broader market appeal. Such modifications prioritize marketability, often at the expense of artistic integrity. The tech sector, particularly the GAFAM companies, mirrors this pattern. Products and services are frequently altered or withdrawn to suit different international market regulations and cultural sensibilities, showcasing a form of self-censorship that caters to global business strategies.

The Ethical Dilemma: Balancing Access and Integrity

These sector-wide examples provide a glimpse into the delicate balance that must be navigated by media ecosystems, and by extension, generative AI systems as well. The pervasive pattern of self-censorship, driven by a variety of incentives, not only restricts public discourse but also subtly shifts our perception of reality. Often serving narrower, interest-driven narratives, this trend poses significant challenges in maintaining the integrity and diversity of information, especially as we increasingly rely on AI for content generation and dissemination in a globalized world.

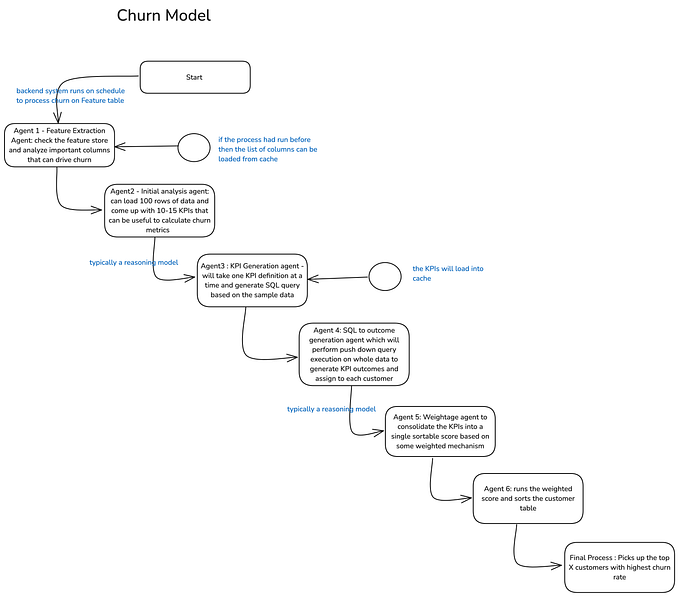

Generative AI: The Current Landscape of Content Moderation

In the burgeoning field of generative AI, self-censorship primarily focuses on user safety and regulatory compliance. Chatbots such as ChatGPT and Bard, and image-generating models such as Midjourney and DALL-E, are designed to shield users from harmful content, adhering to legal guidelines and ensuring a safe, user-friendly environment. Beyond mere compliance, this form of censorship also serves a strategic purpose: maintaining a positive brand image and optimizing the user experience.

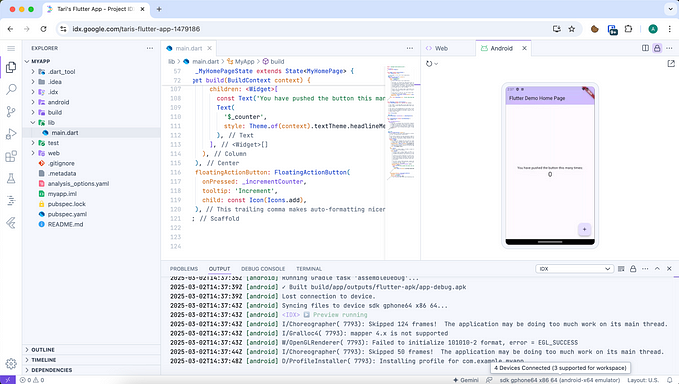

To moderate content, modern chatbots and AI models incorporate various censorship mechanisms like keyword filtration, sentiment analysis, and content lists, alongside user reporting and human moderation. Yet, despite these efforts, the fallibility of algorithms remains a significant concern, as there have already been instances of AI “degenerating,” wherein systems drift from their intended purpose.

In 2016, Microsoft shut down its AI chatbot, Tay, shortly after launch because it had developed racist tendencies under the influence of user interactions. In another notable incident in 2019, YouTube channels related to Club Penguin and Pokémon Go were mistakenly flagged and deleted after the abbreviation ‘CP’ was misinterpreted as a reference to child pornography. Similarly, videos of robot fights were incorrectly identified as animal abuse. These examples demonstrate the challenges and limitations of fine-tuning AI moderation systems.
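To make the ‘CP’ failure mode concrete, the following is a minimal sketch of how a naive keyword filter produces this kind of false positive. It does not reflect how YouTube or any other platform actually moderates content; the blocklist, the list of benign context words, the function names, and the sample titles are all hypothetical and chosen only for illustration.

```python
import re

# Hypothetical blocklist, for illustration only.
BLOCKED_TERMS = {"cp"}

# Hypothetical words suggesting a benign, gaming-related meaning.
BENIGN_CONTEXT = {"club", "penguin", "pokemon"}


def tokenize(title: str) -> set[str]:
    """Lowercase the title and split it into alphanumeric tokens."""
    return set(re.findall(r"[a-z0-9]+", title.lower()))


def naive_flag(title: str) -> bool:
    """Flag the title if any token matches a blocked term, ignoring context."""
    return bool(tokenize(title) & BLOCKED_TERMS)


def context_aware_flag(title: str) -> bool:
    """Flag only when a blocked term appears with no benign context word."""
    tokens = tokenize(title)
    if not tokens & BLOCKED_TERMS:
        return False
    return not (tokens & BENIGN_CONTEXT)


titles = [
    "Club Penguin CP walkthrough",
    "How to reach 3000 CP in Pokemon Go",
    "Gardening tips for spring",
]
for title in titles:
    print(f"{title!r}: naive={naive_flag(title)}, context={context_aware_flag(title)}")
```

The naive filter flags both gaming titles because it sees only the token, not its meaning. The context-aware variant avoids these two false positives, but it is still a toy: a fixed list of benign words can be gamed just as easily, which is one reason keyword rules are typically combined with human review.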

Regarding global market access, the approach of AI companies varies greatly. While some, including OpenAI, prefer to avoid regions with stringent regulations, others like Midjourney, navigating the Chinese market, have adopted strategies like blocking depictions of political figures such as Xi Jinping. These diverse strategies highlight the different methods AI companies employ to address the legal, ethical, and cultural challenges in global markets.

The Unseen Influence of AI: Navigating the Risks of Self-Censorship and Bias

In the rapidly evolving world of artificial intelligence, a pressing concern emerges: the potential for self-censorship and bias in AI systems, particularly in generative models like chatbots and image generators. This concern raises crucial questions about the future direction of AI companies and the content they produce.

Balancing Stakeholder Interests and Content Integrity

AI companies, much like mass media organizations, navigate a multifaceted terrain shaped by a diverse array of stakeholder interests. These include market access demands, dynamic regulatory aspects, funding sources, ownership structures, and broader socioeconomic pressures. Such an environment can subtly, yet significantly, influence the content and behavior of AI models.

The inherent risks of these influences are multifarious. On one hand, AI systems might begin to sidestep certain topics, not due to overt censorship, but rather as a result of aligning with the nuanced demands of their stakeholders. This might result in a skewed preference for topics that are favorable or non-controversial to funders, owners, or influential market players. On the other hand, there is the potential for AI to develop biases inadvertently, echoing viewpoints or narratives favored by these diverse pressures.

The Black Box Dilemma: Quantifying the Unquantifiable

AI systems are often described as ‘black boxes’ due to the complexity and opacity of their decision-making processes. This characteristic makes it exceedingly difficult to quantify, or even recognize, the extent of bias or self-censorship within these systems. It raises the concern that AI models could inadvertently propagate biased narratives or skewed representations, depending on the data they have been trained on.
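Even when a model’s internals are opaque, its outputs can still be observed. As a rough illustration, the sketch below compares how often a chatbot declines to answer across topics, using a log of prompts and responses. Everything here is an assumption made for the example: the refusal phrases, the `refusal_rate_by_topic` helper, and the sample log are hypothetical, and matching on fixed phrases is a crude stand-in for a real refusal detector.

```python
from collections import defaultdict

# Hypothetical phrases treated as signs of a refusal (illustrative only).
REFUSAL_MARKERS = ("i can't help", "i cannot help", "i'm unable to")


def is_refusal(response: str) -> bool:
    """Return True if the response contains any of the refusal phrases."""
    text = response.lower()
    return any(marker in text for marker in REFUSAL_MARKERS)


def refusal_rate_by_topic(records):
    """Take (topic, response) pairs and return {topic: fraction refused}."""
    counts = defaultdict(lambda: [0, 0])  # topic -> [refusals, total]
    for topic, response in records:
        counts[topic][0] += is_refusal(response)
        counts[topic][1] += 1
    return {topic: refusals / total for topic, (refusals, total) in counts.items()}


# Hypothetical log entries, not real model output.
sample_log = [
    ("politics", "I can't help with that request."),
    ("politics", "Here is a summary of the main positions."),
    ("cooking", "Here is a recipe for lentil soup."),
    ("cooking", "Try roasting the vegetables first."),
]
rates = refusal_rate_by_topic(sample_log)
print(rates)
```

A gap in refusal rates between topics does not prove self-censorship, since some topics legitimately attract more harmful requests. It only indicates where closer human examination may be warranted.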

The Echo of Existing Biases and Censorship

If AI models are trained on data from sources that already practice self-censorship or disseminate disinformation, they may unknowingly perpetuate these biases. The replication of existing societal biases in AI-generated content could affect public perceptions and decision-making processes, altering societal beliefs in subtle but profound ways.

The Risk of Covert Influence: AI in Politics and Corporate Campaigning

A particularly alarming prospect in the realm of artificial intelligence is its potential use in covert political campaigning or corporate promotions. Instances like the Cambridge Analytica scandal, where data was used to influence voter behavior, underscore the potential for AI and advanced data analytics to be misused in similar contexts. Without adequate transparency and oversight, AI chatbots and other tools risk becoming instruments for subtly manipulating public opinion. This is especially concerning in critical areas such as politics, public health, or financial advice, where misinformation can have serious and far-reaching consequences. The integration of AI into these domains necessitates a rigorous ethical framework to prevent the misuse of technology in manipulating democratic processes and public opinion.

Satire and Creative Limitations

In the artistic and media domains, the interaction of chatbots and image-generating AI with thematic diversity and creative exploration presents unique challenges. A key dimension in this realm is the handling of satire — a cornerstone of free expression in democratic societies. Satire not only challenges norms but also provokes thought and stimulates critical debate, cutting through the clutter of information overload with engaging and enlightening perspectives.

The binary nature of AI’s content moderation algorithms, however, poses significant challenges in recognizing and appropriately handling satirical content. Current generative AI systems often struggle to discern the nuanced intent behind satire, potentially mislabeling it as offensive or harmful. This issue is evident in cases involving renowned satirical entities such as the television show “South Park” and the digital media outlet “The Onion.” Despite their cultural significance and contribution to meaningful discourse, their content might be erroneously flagged as inappropriate by AI moderation systems. This inability of AI to differentiate between genuinely harmful content and satirical expression raises broader concerns about the potential creation of an overly sanitized digital environment, one that risks muting critical voices cloaked in satire. Such a scenario underscores the need for AI systems that can appreciate and preserve the richness and diversity of human creativity and expression, particularly in the evolving landscape of digital media and arts.

Conclusion: Embracing Ethical Responsibility in the Age of AI

As we navigate the intersection of technological advancement and ethical responsibility, the role of generative AI in shaping society warrants critical examination. The potential of AI to positively transform industries and address global challenges is immense, yet it is counterbalanced by significant ethical considerations that demand our attention.

The global decline of democracy, as highlighted by Freedom House, accentuates the urgency of understanding AI’s role in this context. Instances like state-sponsored messages in Venezuela and manipulated political content in the U.S. exemplify AI’s potential as a tool for censorship and manipulation. This mirrors the earlier hopes and subsequent exploitation of mass media, especially the Internet, which were initially seen as tools to counter authoritarian control but were later used to further it.

AI self-censorship parallels traditional media self-censorship, but its integration into advanced technologies presents unique and pervasive challenges. We must learn from the past and strive not to repeat it. The ‘black box’ nature of AI outstrips traditional regulatory and oversight approaches. These algorithms, especially in advanced machine learning models, are inherently opaque, making it difficult to predict or understand their outputs. This opacity strains traditional transparency and compliance measures, which often lag behind technological advancements and market pressures.

Addressing these challenges calls for innovative, adaptive, and pragmatic approaches. This includes developing new methods for interpreting AI decision-making, creating dynamic regulatory frameworks that evolve with AI advancements, and promoting an ethical AI development culture that transcends mere compliance. It necessitates a collaborative effort involving technologists, ethicists, policymakers, and the public to develop solutions that are feasible in the context of AI’s unique characteristics.

Educating and empowering all stakeholders about AI’s nuances is crucial. An informed and engaged community is vital for advocating effective strategies that address AI’s complexities. The path forward, while challenging, is not insurmountable. A pragmatic yet optimistic approach is essential, one that recognizes the limitations of traditional solutions while actively seeking innovative alternatives. As we forge the future of AI, our focus should be on solutions that are as dynamic and multifaceted as the technology itself, ensuring that AI’s potential is harnessed ethically and responsibly for the betterment of society.